The AI Reframe

Why AI Will De-platform Physicians Who Refuse to Lead It

The drumbeat is getting louder. AI is everywhere. The discourse swings from predictions of a job apocalypse, to elation over AI-enabled early detection of deadly diseases such as pancreatic cancer, to calls to replace radiologists, and even to projected timelines for physician displacement. All of this comes against a backdrop of unease with the AI boom, a sense of “too much” that is “happening too fast”, well captured in this piece from The Atlantic. In medicine, Dr. Eric Topol recently concluded that medical AI presents an implementation paradox: well-documented uses in medical imaging with solid outcomes lag in adoption, while LLMs are used widely but lack outcomes data.

Where does all of this leave a practicing clinician? As someone who walks the walk of integrative cardiology practice AND physician entrepreneurship, I dug into this question to prepare for my talk at AIC 2026 in San Diego. I did what I always do: I sat with the data. I read the papers, traced the citations, and looked at what was actually happening in clinical settings, setting aside the press releases, the vendor decks, and the hype. What I found was not the story of AI triumphant. It was a story of AI unmanaged. And unmanaged AI, in medicine, is not a productivity tool. It is a liability.

I am going to make a claim that may feel counterintuitive, especially if you’ve spent the last year watching colleagues bolt AI tools onto every corner of their practice. Here it is: The physicians who are most at risk of being de-platformed by AI are not the ones who ignore it. They are the ones who are refusing to lead it.

Let me explain.

The Problem Is Not the Tool

At AIC, I will open with what I call Exhibit A. It isn’t a research paper. It is a screenshot: a post in a physician Facebook group where a member shared a detailed prompt provided by her patient and suggested that others consider using it. The prompt called for uploading the patient’s lab PDFs directly into a consumer AI chatbot, including the patient’s age, sex, clinical status, and real Cleveland Heart Labs data, with the intent of deriving a “cardiovascular comparison score for untreated patients in their 60s.” The post got 19 comments, 9 reactions, and zero warnings.

When I asked the physician who posted how she felt about it, she responded by saying that her “savvy patients” were “increasingly using AI to check their care plans, supplement-drug interactions, and even offer some diagnosis for unusual symptoms.” She said she preferred to educate them on how not to get themselves in trouble, rather than “gatekeeping.”

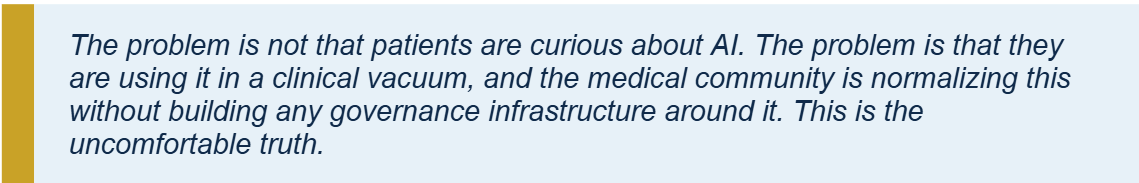

The framing was wrong. Not the instinct to educate — that part is right. But the implicit premise that what her patients were doing was simply an empowerment story. What they were actually doing was uploading Protected Health Information to a platform with no HIPAA Business Associate Agreement, no data retention policy, and no clinical governance. And she, as their physician, retained legal and ethical responsibility for the outcome.

This is not a patient education problem. It is a physician leadership problem. Patients are going to use whatever tools are available to them. They always have. The emergence of “citizen doctors” is a positive externality of generative AI, itself a radical innovation. What they need from us is not permission; they don’t need our permission. What they need is a physician who has built a system that incorporates AI responsibly, so that the AI their patients encounter inside the clinical relationship is governed, contextualized, and safe.

The Data We Have to Sit With

Let me give you the numbers, because the numbers are worth sitting with.

The first number is the hallucination rate. When Mount Sinai researchers presented large language models with fabricated clinical details, the models elaborated on those fabrications in 50 to 82 percent of cases. They did not reject the false premise. They built on it. They generated highly convincing clinical narratives from thin air.

The second number is the disclaimer collapse. In 2022, AI systems responding to health queries included medical disclaimers in roughly a quarter of responses. By 2025, that figure had dropped to under one percent. The Stanford/Berkeley/UBC preprint found a striking inverse correlation (r = −0.64) between a model’s diagnostic accuracy and the likelihood it would include a disclaimer. The better the model performs, the less likely it is to warn you about its limitations. The safety net is vanishing precisely when the stakes are highest.

And then there is the Harvard Business Review’s eight-month study on AI and productivity — the one that generated a politely uncomfortable finding. AI did not reduce work. It intensified it. Workers absorbed tasks outside their expertise because AI made them feel “newly accessible.” They extended their hours because the cognitive burden of reviewing and correcting AI-generated output required more time, not less. The researchers called it the Productivity Paradox.

In medicine, this is not abstract. The physician who uses a generative chatbot to draft a patient summary now owns that summary. Every error in it. Every hallucination it contains. And the patient who uploads their own lab values to an ungoverned AI system, gets back a fabricated cardiovascular risk score, and then shares it with their doctor is still your patient. The liability does not transfer to OpenAI or Anthropic or whoever built the chatbot. It is your responsibility to review the AI slop, including all of its fabricated probabilistic cardiac scoring, to educate your patient, and to provide the deterministic scores and guideline-supported risk estimates that are the crux of current cardiac risk evaluation. This doubles your work and raises your liability.

The Real Displacement Risk

Here is the reframe I want you to take out of this article.

You will not be replaced by AI. That framing — AI replaces the doctor — is a distraction. It mislocates the actual threat. The actual threat is more specific: you will be displaced by the physician down the street who has built an AI-native practice, while you are still using AI as an add-on to a workflow designed in 2015.

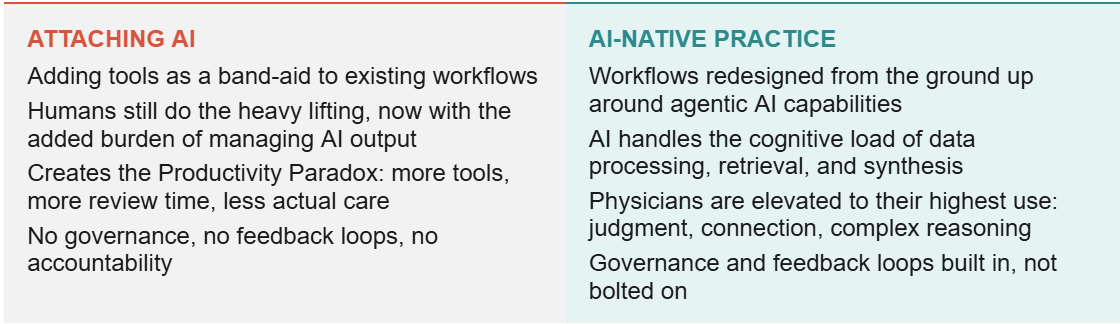

There is a critical distinction here, and I will make it explicitly at AIC because I think most physicians have not fully understood it yet. There is a difference between attaching AI to your practice and building an AI-native practice.

ATTACHING AI

Adding tools as a band-aid to existing workflows

Humans still do the heavy lifting, now with the added burden of managing AI output

Creates the Productivity Paradox: more tools, more review time, less actual care

No governance, no feedback loops, no accountability

AI-NATIVE PRACTICE

Workflows redesigned from the ground up around agentic AI capabilities

AI handles the cognitive load of data processing, retrieval, and synthesis

Physicians are elevated to their highest use: judgment, connection, complex reasoning

Governance and feedback loops built in, not bolted on

Attaching AI means you have a chatbot answering patient messages, a transcription tool sitting in on visits, and a summarization widget at the end of the note. These tools exist in parallel to your workflow. When they fail, and they will fail, you catch the failure manually. You are now doing your original job plus reviewing AI output. This is the Productivity Paradox in clinical form.

Building an AI-native practice means starting from a different question: not “which AI tool can I add to what I already do?” but “what would this practice look like if I designed it today, knowing what AI can and cannot do?” The answer requires mapping your actual clinical workflows — not the org chart version, the real version, with all the workarounds and handoffs and hidden judgment calls — and identifying precisely where agentic AI adds value and where it creates risk.
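One way to make that mapping exercise concrete is to write each real workflow step down with a rough value score and a rough risk score, then sort the steps into buckets. The sketch below is only an illustration under stated assumptions: the step names, the 1-to-5 scores, the thresholds, and the bucket labels are all hypothetical placeholders, not a validated clinical method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class WorkflowStep:
    name: str
    ai_value: int  # hypothetical 1-5 score: how much time or insight AI could add
    ai_risk: int   # hypothetical 1-5 score: how much harm a wrong AI output could cause


def classify(step: WorkflowStep) -> str:
    """Sort a step into a bucket; risk is checked first so it always wins."""
    if step.ai_risk >= 4:          # assumed threshold for unacceptable risk
        return "human-led"
    if step.ai_value >= 4:         # assumed threshold for worthwhile value
        return "ai-assisted with review"
    return "leave as is"


# Hypothetical steps from a cardiology visit, scored by the practice itself.
steps = [
    WorkflowStep("Draft visit note from transcript", ai_value=5, ai_risk=2),
    WorkflowStep("Estimate cardiovascular risk from labs", ai_value=3, ai_risk=5),
    WorkflowStep("Schedule follow-up visit", ai_value=2, ai_risk=1),
]

plan = {step.name: classify(step) for step in steps}

for name, bucket in plan.items():
    print(f"{name}: {bucket}")
```

Checking risk before value is a deliberate choice in this sketch: a step that is both high value and high risk stays human-led rather than being handed to the AI.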

The Framework I’m Using

At AIC I will introduce a framework I’ve been developing for my own practice and for the physicians I work with through Dr.MBA.AI. It is called A.G.E.N.T., and I learned of it at the Harvard Data Science Institute Agentic AI In Healthcare course. The name is convenient, given that agentic AI is the paradigm we’re moving toward, but the acronym is genuinely functional:

Align — Start with clinical outcomes, not AI capabilities. What are you trying to achieve for patients? Design the AI system around that.

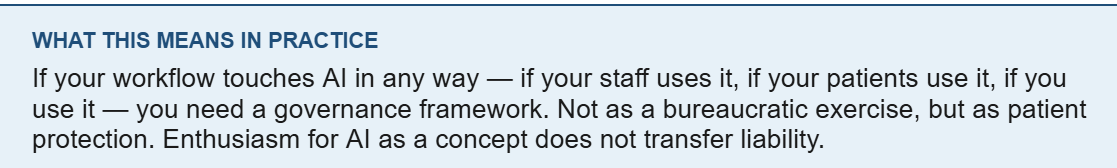

Govern — Establish explicit consent frameworks, HIPAA-compliant data handling, clear disclosure requirements. Governance is not bureaucracy; it is patient protection.

Empower — Build your team’s cognitive infrastructure before deploying new tools. The skill of critically evaluating AI output — what the researchers call “mindware” — is learnable, but it has to be deliberately taught.

Navigate — Map your clinical work graph. Identify exactly where AI accelerates value and where AI hallucinations introduce unacceptable risk.

Trust, but verify — Design graceful escalation into every workflow. When the AI encounters ambiguity or high-risk scenarios, it escalates to a human. Not sometimes. Every time.
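The escalation rule in the last step can be sketched in a few lines. This is a minimal illustration, assuming a hypothetical list of high-risk terms, a hypothetical confidence score attached to each AI draft, and an arbitrary threshold; none of these come from a real product or from the A.G.E.N.T. course material.

```python
from dataclasses import dataclass

# Hypothetical trigger terms; a real practice would define its own list.
HIGH_RISK_TERMS = ("chest pain", "syncope", "shortness of breath")


@dataclass
class Draft:
    patient_message: str  # what the patient wrote
    reply_text: str       # what the AI proposes to send back
    confidence: float     # hypothetical 0.0-1.0 score attached to the draft


def route(draft: Draft, min_confidence: float = 0.9) -> str:
    """Decide where an AI-drafted reply goes next. Nothing is sent automatically."""
    message = draft.patient_message.lower()
    if any(term in message for term in HIGH_RISK_TERMS):
        return "escalate_to_clinician"   # high-risk content: every time
    if draft.confidence < min_confidence:
        return "escalate_to_clinician"   # ambiguity: every time
    return "queue_for_signoff"           # routine drafts still get human sign-off


urgent = Draft("I had chest pain after my walk", "Try resting more.", 0.97)
unsure = Draft("Can I take magnesium with my statin?", "Probably fine.", 0.55)
routine = Draft("Please confirm my appointment time", "You are booked for 9 am.", 0.98)

for d in (urgent, unsure, routine):
    print(route(d))
```

Note that even the routine path ends in a sign-off queue rather than an automatic send, which reflects the point that the physician still owns whatever the AI drafts.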

What I want to emphasize about this framework is the order. Most physicians start at Empower (what tool do I train my staff on?) or Navigate (where can AI fit in my workflow?). The physicians who are building durable AI-native practices start at Align. They define the clinical outcome first. Then they build backward.

The functional medicine framework is useful here because we already think in systems. We understand networks, feedback loops, and root causes. Those are exactly the mental models required for responsible AI integration. You are not starting from zero.

What the Reframe Actually Changes

The reframe I’m asking you to make is not about AI at all. It is about leadership.

The physicians who are going to be displaced by AI are the ones who outsource the AI decisions to whoever happens to sell them a tool, who treat consent as a checkbox rather than a protocol, who never map their workflows because they don’t have time, who assume the liability is somewhere else. Those physicians are not being replaced by machines. They are being outcompeted by colleagues who understood that AI is a practice design problem, not a software problem.

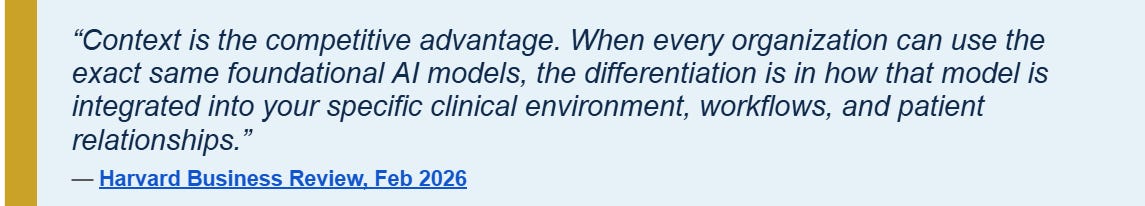

The physicians who will not be displaced are the ones who refuse to treat AI as a passive tool and instead treat it as a clinical team member that needs to be onboarded, trained, corrected, and governed. Who understand that the competitive advantage is not which AI model you use — every practice can access the same foundational models — but the clinical context you have built around it.

Your longitudinal patient relationships. Your systems biology lens, whether your practice is integrative, specialized, longevity-focused, or functional medicine. Your specific clinical protocols and values. Your outcomes. The AI model is a commodity. Your context is not.

That is the reframe. Not “will AI replace me?” but “is my practice designed to lead AI, or just react to it?”

In the next article in this series, I’ll show you what that looks like in practice. Specifically: what happened when I ran my first AI build-a-thon for physicians, what we built, what broke, and what I’d do differently.

NEXT IN THIS SERIES FOR PAID SUBSCRIBERS

No. 2 - Build, Ship, Learn: Reflections on Running My First AI Build-a-Thon for Physicians. How we built a functioning supplement storefront in a four-hour live session, what the economics actually look like, and what I learned about physician readiness for AI implementation. Get access to curated resources in the Dr. MBA Consulting Community with a paid subscription.